Preamble

The direct way to a PCI passthrough virtual machine on Ubuntu 20.04 LTS. I try to keep changes to the host operating system to a minimum, but provide enough detail that even Linux rookies are able to participate.

The final system will run Xubuntu 20.04 as the host operating system (OS), and Windows 10 2004 as the guest OS. Gaming is the main use case of the guest system.

Unfortunately, the setup process can be pretty complex. It consists of fixed base settings, some variable settings and several optional (mostly performance) settings. In order to keep this post readable, and because I aim to use the virtual machine for gaming only, I minimized the variable parts for latency optimization. The variable topics themselves are covered in linked articles; I hope this makes sense. 🙂

About this guide

This guide targets Ubuntu 20.04 and is based on my former guides for Ubuntu 18.04 and 16.04 host systems.

Attention!

A newer version based on Ubuntu 22.04 with a Windows 11 guest is out now!

However, this guide should also be applicable to Pop!_OS 19.04 and newer. If you wish to use Pop!_OS as your host system, you can do so; just look out for my colorful Pop!_OS labels.

Breaking changes for older passthrough setups in Ubuntu 20.04

Kernel version

Starting with kernel version 5.4, the “vfio-pci” driver is no longer a kernel module, but built into the kernel. We therefore have to find a different way than the initramfs-tools/modules config files I recommended in, e.g., the 18.04 guide.

QEMU version

Ubuntu 20.04 ships with QEMU version 4.2. It introduces audio fixes, which need attention if you want to reuse a virtual machine definition created with an older version.

If you want to use a newer version of QEMU, you can build it on your own.

Attention!

QEMU version 5.0.0 – 5.0.0-6 should not be used for a passthrough setup due to stability issues.

Boot manager

This is not a “problem” with Ubuntu 20.04 itself, but it affects at least one popular Ubuntu-based distro: Pop!_OS 20.04.

I think that, starting with version 19.04, Pop!_OS uses systemd (systemd-boot) as its boot manager instead of GRUB. This means that kernel parameters are set differently in Pop!_OS.

Introduction to VFIO, PCI passthrough and IOMMU

Virtual Function I/O (or VFIO) allows a virtual machine (VM) direct access to a PCI hardware resource, such as a graphics processing unit (GPU). Virtual machines with GPU passthrough can achieve close to bare-metal performance, which makes running games in a Windows virtual machine possible.

Let me make the following simplifications, in order to fulfill my claim of beginner friendliness for this guide:

PCI devices are organized in so-called IOMMU groups. In order to pass a device over to the virtual machine, we have to pass all the devices of the same IOMMU group as well. In a perfect world each device has its own IOMMU group; unfortunately, that’s not the case.

Passed through devices are isolated, and thus no longer available to the host system. Furthermore, it is only possible to isolate all devices of one IOMMU group at the same time.

This means that a device which is an “IOMMU group sibling” of a passed-through device can no longer be used on the host, even if the VM does not use it.

Is this content helpful? Then please consider supporting me.

If you appreciate the content I create, this is your chance to give something back and earn some good old karma.

Although ads are fun to play with, and very important for content creators, putting ads on my website felt strongly hypocritical to me, even though I always try to keep data collection to a minimum.

Thus, please consider supporting this website directly 😘

Requirements

Hardware

In order to successfully follow this guide, the hardware must support virtualization and have properly separated IOMMU groups.

The hardware used

- AMD Ryzen 7 1800X (CPU)

- Asus Prime-x370 pro (Mainboard)

- 2x 16 GB DDR4-3200 running at 2400MHz (RAM)

- Nvidia GeForce GTX 1050 (Host GPU; PCIe slot 1)

- Nvidia GeForce GTX 1060 (Guest GPU; PCIe slot 2)

- 500 GB NVME SSD (Guest OS; M.2 slot)

- 500 GB SSD (Host OS; SATA)

- 750W PSU

When choosing the system’s hardware, I was eager to avoid the need for kernel patching. For this combination of mainboard and CPU, the ACS override patch is not required.

If your mainboard does not provide proper IOMMU separation, you can try to solve this by using a patched kernel, or by patching the kernel on your own.

BIOS settings

Enable the following flags in your BIOS:

Advanced \ CPU config - SVM Module -> enable

Advanced \ AMD CBS - IOMMU -> enable

Attention!

The ASUS Prime X370/X470/X570 Pro BIOS versions that add AMD Ryzen 3000-series support (v. 4602 to 5220) will break a PCI passthrough setup.

Error: “Unknown PCI header type ‘127’”.

BIOS versions up to and including 4406 (2019/03/11) work.

BIOS versions 5406 (2019/11/25) and newer work.

I used version 4207 (8th Dec 2018).

Host operating system settings

I have installed Xubuntu 20.04 x64 (UEFI) from here.

Ubuntu 20.04 LTS ships with kernel version 5.4, which works well for VFIO purposes. Check your kernel version via: uname -r

Attention!

Any kernel from version 4.15 onwards works for a Ryzen passthrough setup, except kernel versions 5.1, 5.2 and 5.3, including all of their subversions.
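The kernel rule above is easy to misread when comparing version strings by eye. Here is a small sketch that assumes the rule exactly as stated: 4.15 or newer, but no 5.1, 5.2 or 5.3 kernel. The function name kernel_is_supported is a hypothetical helper of mine and not part of any package.

```shell
# Hypothetical helper: check a kernel release string (as printed by `uname -r`)
# against the rule above: 4.15 or newer, excluding every 5.1, 5.2 and 5.3 kernel.
# Returns 0 if supported, 1 if not, 2 if the string cannot be parsed.
kernel_is_supported() {
    local release="$1" major minor
    # "5.4.0-42-generic" -> major "5", minor "4" (anything after the digits is dropped)
    major=$(printf '%s\n' "$release" | cut -d. -f1)
    # -s: print nothing if there is no dot at all, so "5" is rejected as unparseable
    minor=$(printf '%s\n' "$release" | cut -s -d. -f2 | sed 's/[^0-9].*//')
    case "$major" in ''|*[!0-9]*) return 2 ;; esac
    case "$minor" in ''|*[!0-9]*) return 2 ;; esac
    # Older than 4.15
    if [ "$major" -lt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -lt 15 ]; }; then
        return 1
    fi
    # The excluded 5.1 to 5.3 series
    if [ "$major" -eq 5 ] && [ "$minor" -ge 1 ] && [ "$minor" -le 3 ]; then
        return 1
    fi
    return 0
}
```

Usage example: kernel_is_supported "$(uname -r)" && echo "kernel version looks fine". This only checks the version number. It says nothing about other VFIO problems of a particular kernel build.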

Before continuing make sure that your kernel plays nice in a VFIO environment.

Install the required software

Install QEMU, Libvirt, the virtualization manager and related software via:

sudo apt install qemu-kvm qemu-utils libvirt-daemon-system libvirt-clients bridge-utils virt-manager ovmf

Setting up the PCI passthrough

We are going to pass the following devices through to the VM:

- 1x GPU: Nvidia GeForce GTX 1060

- 1x USB host controller

- 1x SSD: 500 GB NVME M.2

Enabling IOMMU feature

Enable the IOMMU feature via your grub config. On a system with AMD Ryzen CPU run:

sudo nano /etc/default/grub

Edit the line which starts with GRUB_CMDLINE_LINUX_DEFAULT to match:

GRUB_CMDLINE_LINUX_DEFAULT="amd_iommu=on iommu=pt"

In case you are using an Intel CPU the line should read:

GRUB_CMDLINE_LINUX_DEFAULT="intel_iommu=on"

Once you’re done editing, save the changes and exit the editor (CTRL+x, then y, then Enter).
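If you prefer not to edit the file in nano, the same change can be scripted. This is a minimal sketch that assumes GNU sed (the Ubuntu default) and kernel parameters that contain no | or & character. set_grub_cmdline is a hypothetical helper name. Like the manual edit, it replaces the whole GRUB_CMDLINE_LINUX_DEFAULT line, so existing parameters such as quiet splash are dropped.

```shell
# Hypothetical helper: replace the GRUB_CMDLINE_LINUX_DEFAULT="..." line in FILE
# with the given kernel parameters, keeping a backup copy as FILE.bak.
# Assumes GNU sed and that PARAMS contains no '|' or '&' (special in the sed command).
set_grub_cmdline() {
    local file="$1" params="$2"
    if ! grep -q '^GRUB_CMDLINE_LINUX_DEFAULT=' "$file"; then
        echo "no GRUB_CMDLINE_LINUX_DEFAULT line found in $file" >&2
        return 1
    fi
    cp "$file" "$file.bak" || return 1
    # Only the _DEFAULT line matches; GRUB_CMDLINE_LINUX="..." is left untouched
    sed -i "s|^GRUB_CMDLINE_LINUX_DEFAULT=.*|GRUB_CMDLINE_LINUX_DEFAULT=\"$params\"|" "$file"
}
```

Run it as root, e.g. set_grub_cmdline /etc/default/grub "amd_iommu=on iommu=pt". Afterwards, run sudo update-grub as described.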

Afterwards run:

sudo update-grub

→ Reboot the system when the command has finished.

After the reboot, verify that IOMMU is enabled by running:

dmesg | grep AMD-Vi

[ 0.792691] AMD-Vi: IOMMU performance counters supported

[ 0.794428] AMD-Vi: Found IOMMU at 0000:00:00.2 cap 0x40

[ 0.794429] AMD-Vi: Extended features (0xf77ef22294ada):

[ 0.794434] AMD-Vi: Interrupt remapping enabled

[ 0.794436] AMD-Vi: virtual APIC enabled

[ 0.794688] AMD-Vi: Lazy IO/TLB flushing enabled

For systemd boot manager as used in Pop!_OS

On systems that boot via systemd-boot, such as Pop!_OS, you can use kernelstub to provide boot parameters. Use it like so:

sudo kernelstub -o "amd_iommu=on iommu=pt"

Identification of the guest GPU

Attention!

After the upcoming steps, the guest GPU will be ignored by the host OS. You have to have a second GPU for the host OS now!

In this chapter we want to identify and isolate the devices before we pass them over to the virtual machine. We are looking for a GPU and a USB controller in suitable IOMMU groups. This means that either each device has its own group, or both devices share one group.

The game plan is to bind the vfio-pci driver to the GPU we want to pass through, before the regular graphics card driver can take control of it.

This is the most crucial step in the process. In case your mainboard does not support proper IOMMU grouping, you can still try patching your kernel with the ACS override patch.

One can use a bash script like this in order to determine devices and their grouping:

#!/bin/bash
# change the 999 if needed
shopt -s nullglob
for d in /sys/kernel/iommu_groups/{0..999}/devices/*; do
    n=${d#*/iommu_groups/*}; n=${n%%/*}
    printf 'IOMMU Group %s ' "$n"
    lspci -nns "${d##*/}"
done

Source: wiki.archlinux.org, with added sorting for the first 999 IOMMU groups.

IOMMU Group 0 00:01.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 1 00:01.1 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) PCIe GPP Bridge [1022:1453]

IOMMU Group 2 00:01.3 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) PCIe GPP Bridge [1022:1453]

IOMMU Group 3 00:02.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 4 00:03.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 5 00:03.1 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) PCIe GPP Bridge [1022:1453]

IOMMU Group 6 00:03.2 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) PCIe GPP Bridge [1022:1453]

IOMMU Group 7 00:04.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 8 00:07.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 9 00:07.1 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Internal PCIe GPP Bridge 0 to Bus B [1022:1454]

IOMMU Group 10 00:08.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-1fh) PCIe Dummy Host Bridge [1022:1452]

IOMMU Group 11 00:08.1 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Internal PCIe GPP Bridge 0 to Bus B [1022:1454]

IOMMU Group 12 00:14.0 SMBus [0c05]: Advanced Micro Devices, Inc. [AMD] FCH SMBus Controller [1022:790b] (rev 59)

IOMMU Group 12 00:14.3 ISA bridge [0601]: Advanced Micro Devices, Inc. [AMD] FCH LPC Bridge [1022:790e] (rev 51)

IOMMU Group 13 00:18.0 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 0 [1022:1460]

IOMMU Group 13 00:18.1 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 1 [1022:1461]

IOMMU Group 13 00:18.2 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 2 [1022:1462]

IOMMU Group 13 00:18.3 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 3 [1022:1463]

IOMMU Group 13 00:18.4 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 4 [1022:1464]

IOMMU Group 13 00:18.5 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 5 [1022:1465]

IOMMU Group 13 00:18.6 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 6 [1022:1466]

IOMMU Group 13 00:18.7 Host bridge [0600]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Data Fabric: Device 18h; Function 7 [1022:1467]

IOMMU Group 14 01:00.0 Non-Volatile memory controller [0108]: Micron/Crucial Technology P1 NVMe PCIe SSD [c0a9:2263] (rev 03)

IOMMU Group 15 02:00.0 USB controller [0c03]: Advanced Micro Devices, Inc. [AMD] X370 Series Chipset USB 3.1 xHCI Controller [1022:43b9] (rev 02)

IOMMU Group 15 02:00.1 SATA controller [0106]: Advanced Micro Devices, Inc. [AMD] X370 Series Chipset SATA Controller [1022:43b5] (rev 02)

IOMMU Group 15 02:00.2 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] X370 Series Chipset PCIe Upstream Port [1022:43b0] (rev 02)

IOMMU Group 15 03:00.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 03:02.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 03:03.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 03:04.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 03:06.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 03:07.0 PCI bridge [0604]: Advanced Micro Devices, Inc. [AMD] 300 Series Chipset PCIe Port [1022:43b4] (rev 02)

IOMMU Group 15 07:00.0 USB controller [0c03]: ASMedia Technology Inc. ASM1143 USB 3.1 Host Controller [1b21:1343]

IOMMU Group 15 08:00.0 Ethernet controller [0200]: Intel Corporation I211 Gigabit Network Connection [8086:1539] (rev 03)

IOMMU Group 15 09:00.0 PCI bridge [0604]: ASMedia Technology Inc. ASM1083/1085 PCIe to PCI Bridge [1b21:1080] (rev 04)

IOMMU Group 15 0a:04.0 Multimedia audio controller [0401]: C-Media Electronics Inc CMI8788 [Oxygen HD Audio] [13f6:8788]

IOMMU Group 16 0b:00.0 VGA compatible controller [0300]: NVIDIA Corporation GP107 [GeForce GTX 1050 Ti] [10de:1c82] (rev a1)

IOMMU Group 16 0b:00.1 Audio device [0403]: NVIDIA Corporation GP107GL High Definition Audio Controller [10de:0fb9] (rev a1)

IOMMU Group 17 0c:00.0 VGA compatible controller [0300]: NVIDIA Corporation GP104 [GeForce GTX 1060 6GB] [10de:1b83] (rev a1)

IOMMU Group 17 0c:00.1 Audio device [0403]: NVIDIA Corporation GP104 High Definition Audio Controller [10de:10f0] (rev a1)

IOMMU Group 18 0d:00.0 Non-Essential Instrumentation [1300]: Advanced Micro Devices, Inc. [AMD] Zeppelin/Raven/Raven2 PCIe Dummy Function [1022:145a]

IOMMU Group 19 0d:00.2 Encryption controller [1080]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) Platform Security Processor [1022:1456]

IOMMU Group 20 0d:00.3 USB controller [0c03]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) USB 3.0 Host Controller [1022:145c]

IOMMU Group 21 0e:00.0 Non-Essential Instrumentation [1300]: Advanced Micro Devices, Inc. [AMD] Zeppelin/Renoir PCIe Dummy Function [1022:1455]

IOMMU Group 22 0e:00.2 SATA controller [0106]: Advanced Micro Devices, Inc. [AMD] FCH SATA Controller [AHCI mode] [1022:7901] (rev 51)

IOMMU Group 23 0e:00.3 Audio device [0403]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) HD Audio Controller [1022:1457]

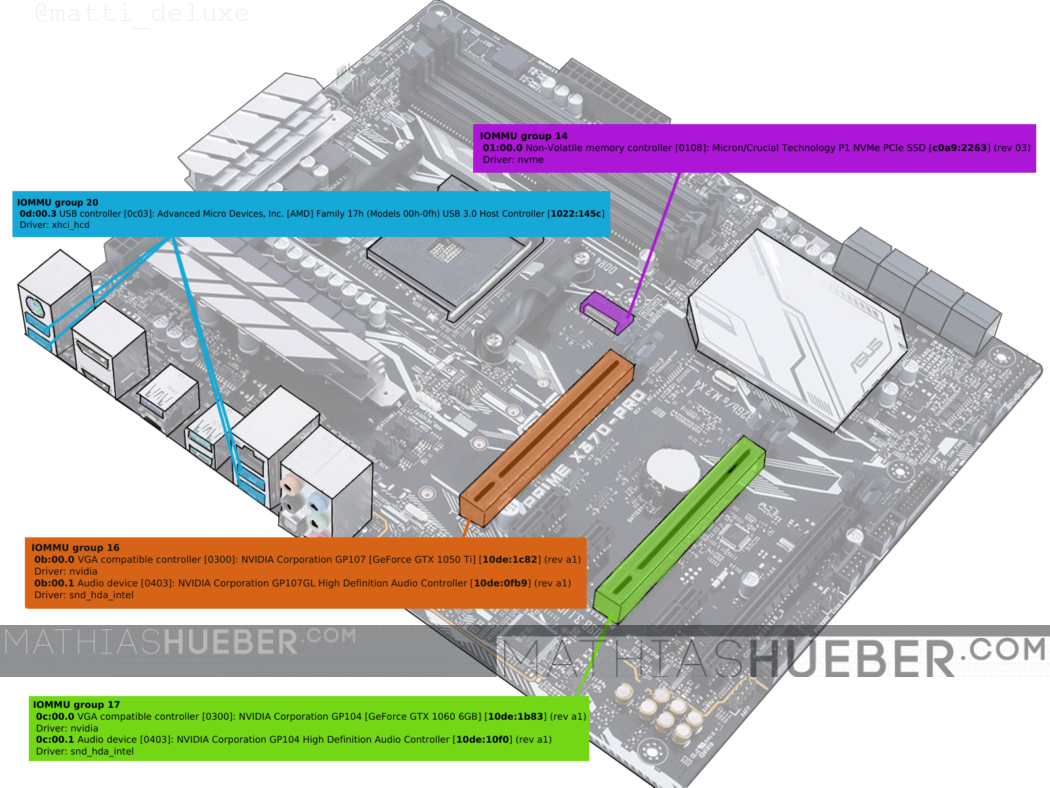

The syntax of the resulting output reads like this:

The interesting bits are the PCI bus id (marked dark red in figure 1) and the device identification (marked orange in figure 1).
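To extract just those two bits, you can post-process the script's output. This sketch assumes the output format shown above: "IOMMU Group N" followed by an lspci -nns line whose last [xxxx:xxxx] pair is the device id. parse_iommu_listing is a hypothetical name.

```shell
# Hypothetical helper: read the IOMMU listing from stdin and print
# "GROUP BUS_ID VENDOR:DEVICE" for each device line, e.g.
# "IOMMU Group 17 0c:00.0 VGA ... [10de:1b83] (rev a1)" -> "17 0c:00.0 10de:1b83".
# The greedy ".*" before "\[" makes sed match the LAST [xxxx:xxxx] pair on the
# line, which is where lspci -nn puts the vendor:device id.
parse_iommu_listing() {
    sed -n 's/^IOMMU Group \([0-9][0-9]*\) \([0-9a-f:.][0-9a-f:.]*\) .*\[\([0-9a-f]\{4\}:[0-9a-f]\{4\}\)\].*$/\1 \2 \3/p'
}
```

If you saved the script above as, say, iommu.sh (any name works), ./iommu.sh | parse_iommu_listing prints one compact line per device.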

These are the devices of interest:

NVME M.2

========

IOMMU group 14

01:00.0 Non-Volatile memory controller [0108]: Micron/Crucial Technology P1 NVMe PCIe SSD [c0a9:2263] (rev 03)

Driver: nvme

Guest GPU - GTX1060

=================

IOMMU group 17

0c:00.0 VGA compatible controller [0300]: NVIDIA Corporation GP104 [GeForce GTX 1060 6GB] [10de:1b83] (rev a1)

Driver: nvidia

0c:00.1 Audio device [0403]: NVIDIA Corporation GP104 High Definition Audio Controller [10de:10f0] (rev a1)

Driver: snd_hda_intel

USB host

=======

IOMMU group 20

0d:00.3 USB controller [0c03]: Advanced Micro Devices, Inc. [AMD] Family 17h (Models 00h-0fh) USB 3.0 Host Controller [1022:145c]

Driver: xhci_hcd

We will isolate the GTX 1060 (PCI-bus 0c:00.0 and 0c:00.1; device id 10de:1b83, 10de:10f0).

The USB-controller (PCI-bus 0d:00.3; device id 1022:145c) is used later on.

The NVME SSD can be passed through without identification numbers. It is crucial though that it has its own group.
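Whether a group contains anything besides the devices you want can also be checked from the same listing. This sketch assumes the listing format above, and both function names are hypothetical. It treats a group as clean when every member sits at the given bus/slot prefix, e.g. 0c:00. for the GTX 1060 and its audio function.

```shell
# Hypothetical helper: print the PCI bus ids of all devices in IOMMU group $1,
# reading the listing produced by the script above from stdin.
iommu_group_members() {
    awk -v g="$1" '$1 == "IOMMU" && $2 == "Group" && $3 == g { print $4 }'
}

# Succeed only if IOMMU group $1 is non-empty and every device in it sits at
# the PCI bus/slot prefix $2 (e.g. "0c:00."), i.e. it has no unrelated siblings.
iommu_group_is_exclusive() {
    local group="$1" prefix="$2" members m
    members=$(iommu_group_members "$group")
    [ -n "$members" ] || return 1
    for m in $members; do
        case "$m" in
            "$prefix"*) ;;          # belongs to the wanted device
            *) return 1 ;;          # an unrelated sibling in the same group
        esac
    done
    return 0
}
```

For example, ./iommu.sh | iommu_group_is_exclusive 17 0c:00. should succeed for my GPU group, while group 15 (chipset USB, SATA, network, ...) would fail for any single device.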

Isolation of the guest GPU

In order to isolate the GPU we have two options: select the devices by PCI bus address or by device ID. Both options have pros and cons.

Apply VFIO-pci driver by device id (via boot manager)

Use this option only if the graphics cards in the system are not exactly the same model. Identical cards share the same device ids, so vfio-pci would claim both of them.

Update the GRUB kernel command line again, and add the PCI device ids with the vfio-pci.ids parameter.
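Instead of copying the ids by hand, you can collect them from lspci -nn output. This sketch assumes the lspci -nn line format shown above, where the last [xxxx:xxxx] pair on a line is the vendor:device id. vfio_ids_for_slot is a hypothetical helper. It joins the ids of all functions at one bus/slot, so 0c:00 covers both 0c:00.0 and 0c:00.1.

```shell
# Hypothetical helper: read `lspci -nn` output on stdin and print the
# comma-separated vendor:device ids of all functions at SLOT (e.g. "0c:00"),
# ready to be used as vfio-pci.ids=...
vfio_ids_for_slot() {
    local slot="$1"
    # Only hex digits and ':' are allowed, so the slot is safe inside the regex
    case "$slot" in ''|*[!0-9a-f:]*) echo "bad slot: $slot" >&2; return 1 ;; esac
    # Keep lines starting with "SLOT.<function>" and print their last [xxxx:xxxx] pair
    sed -n "s/^$slot\.[0-7] .*\[\([0-9a-f]\{4\}:[0-9a-f]\{4\}\)\].*\$/\1/p" |
        paste -sd, -
}
```

With the devices listed above, lspci -nn | vfio_ids_for_slot 0c:00 should print 10de:1b83,10de:10f0, which matches the value used in the line below.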

Run sudo nano /etc/default/grub and update the GRUB_CMDLINE_LINUX_DEFAULT line again:

GRUB_CMDLINE_LINUX_DEFAULT="amd_iommu=on iommu=pt kvm.ignore_msrs=1 vfio-pci.ids=10de:1b83,10de:10f0"

Remark! The “kvm.ignore_msrs=1” parameter is only necessary for Windows 10 versions newer than 1803 (otherwise you get a BSOD).

Save and close the file. Afterwards run:

sudo update-grub

Attention!

After the following reboot the isolated GPU will be ignored by the host OS. You have to use the other GPU for the host OS NOW!

→ Reboot the system.

Analogously, on a systemd-boot system like Pop!_OS 19.04 and newer, you can use:

sudo kernelstub --add-options "vfio-pci.ids=10de:1b83,10de:10f0"

Apply VFIO-pci driver by PCI bus id (via script)

This method works even if you want to isolate one of two identical cards. Be aware, though, that the PCI bus ids will change when PCI hardware is added to or removed from the system (and sometimes after BIOS updates).

Create a file via sudo nano /etc/initramfs-tools/scripts/init-top/vfio.sh and add the following lines:

#!/bin/sh
PREREQ=""
prereqs()
{
    echo "$PREREQ"
}
case $1 in
prereqs)
    prereqs
    exit 0
    ;;
esac
for dev in 0000:0c:00.0 0000:0c:00.1
do
    echo "vfio-pci" > "/sys/bus/pci/devices/$dev/driver_override"
    echo "$dev" > /sys/bus/pci/drivers/vfio-pci/bind
done
exit 0

Thanks to /u/nazar-pc on reddit. Make sure the line

for dev in 0000:0c:00.0 0000:0c:00.1

has the correct PCI bus ids for the GPU you want to isolate. Now save and close the file.
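Because bus ids can change, it can be worth checking what actually sits at those addresses before you rebuild the initramfs. sysfs exposes a vendor and a device file for every PCI device (containing values like 0x10de), and the sketch below reads them. The helper name pci_id_at is hypothetical. So is its sysfs-root argument: it is normally /sys and is a parameter only so that the function can be tried on a scratch directory.

```shell
# Hypothetical helper: print "vendor:device" for the PCI device at ADDRESS,
# read from SYSFS_ROOT/bus/pci/devices/ADDRESS (SYSFS_ROOT is normally /sys).
# sysfs stores the ids as e.g. "0x10de", so the 0x prefix is stripped.
pci_id_at() {
    local dev="$1/bus/pci/devices/$2"
    if [ ! -r "$dev/vendor" ] || [ ! -r "$dev/device" ]; then
        echo "no PCI device at $2" >&2
        return 1
    fi
    printf '%s:%s\n' "$(sed 's/^0x//' "$dev/vendor")" "$(sed 's/^0x//' "$dev/device")"
}
```

For example, for dev in 0000:0c:00.0 0000:0c:00.1; do echo "$dev $(pci_id_at /sys "$dev")"; done should list 10de:1b83 and 10de:10f0 on my system. If you see other ids, the addresses in vfio.sh need updating.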

Make the script executable via:

sudo chmod +x /etc/initramfs-tools/scripts/init-top/vfio.sh

Create another file via sudo nano /etc/modprobe.d/kvm.conf

And add the following line:

options kvm ignore_msrs=1

Save and close the file.

When all is done run:

sudo update-initramfs -u -k all

Attention!

After the following reboot the isolated GPU will be ignored by the host OS. You have to use the other GPU for the host OS NOW!

→ Reboot the system.

Verify the isolation

In order to verify a proper isolation of the device, run:

lspci -nnv

Find the line "Kernel driver in use" for the GPU and its audio part. It should state vfio-pci.

0b:00.0 VGA compatible controller [0300]: NVIDIA Corporation GP104 [GeForce GTX 1060 6GB] [10de:1b83] (rev a1) (prog-if 00 [VGA controller])

Subsystem: ASUSTeK Computer Inc. GP104 [1043:8655]

Flags: fast devsel, IRQ 44

Memory at f4000000 (32-bit, non-prefetchable) [size=16M]

Memory at c0000000 (64-bit, prefetchable) [size=256M]

Memory at d0000000 (64-bit, prefetchable) [size=32M]

I/O ports at d000 [size=128]

Expansion ROM at f5000000 [disabled] [size=512K]

Capabilities: <access denied>

Kernel driver in use: vfio-pci

Kernel modules: nvidiafb, nouveau, nvidia_drm, nvidia
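On a system with many PCI devices the lspci output is long. The sketch below picks out the driver of one device. It assumes the usual lspci layout, where each device starts with its bus id at the beginning of a line and its details are indented below it. driver_in_use is a hypothetical helper. lspci -nnk prints the same "Kernel driver in use" line with less noise than -nnv.

```shell
# Hypothetical helper: read `lspci -nnk` (or `lspci -nnv`) output on stdin and
# print the "Kernel driver in use" of the device at SLOT (e.g. 0c:00.0).
driver_in_use() {
    awk -v slot="$1" '
        /^[0-9a-f]/ { current = $1 }    # device header line: remember its bus id
        current == slot && /Kernel driver in use:/ { print $NF }
    '
}
```

For example, lspci -nnk | driver_in_use 0c:00.0 should print vfio-pci. The same check can be run for the audio function 0c:00.1.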

Congratulations, the hardest part is done! 🙂

Creating the Windows virtual machine

The virtualization is done via an open source machine emulator and virtualizer called QEMU. One can either run QEMU directly, or use a GUI called virt-manager in order to set up and run a virtual machine.

I prefer the GUI. Unfortunately, not every setting is supported in virt-manager. Thus, I define the basic settings in the UI, do a quick VM start and “force stop” it right after I see that the GPU is passed over correctly. Afterwards, one can edit the missing bits into the VM config via virsh edit.

Make sure you have your Windows ISO file, as well as the virtio Windows drivers, downloaded and ready for the installation.

Pre-configuration steps

As I said, lots of variable parts can add complexity to a passthrough guide. Before we can continue we have to make a decision about the storage type of the virtual machine.

Creating an image container if needed

In this guide I pass my NVME M.2 SSD to the virtual machine. Another viable solution is to use a raw image container, see the storage post for further information.

Creating an Ethernet Bridge

We will use a bridged connection for the virtual machine. This requires a wired connection to the computer.

I simply followed the great guide from heiko here.

See the Ethernet setups post for further information.

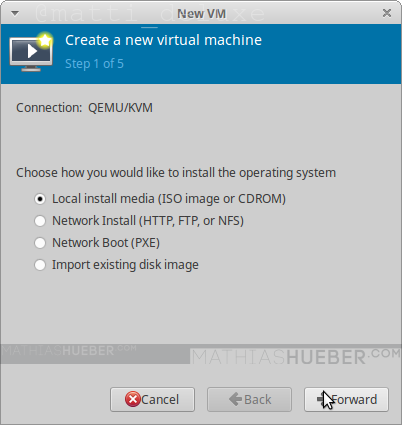

Create a new virtual machine

As said before we use the virtual machine manager GUI to create the virtual machine with basic settings.

In order to do so start up the manager and click the “Create a new virtual machine” button.

Step 1

Select “Local install media” and proceed forward (see figure 3).

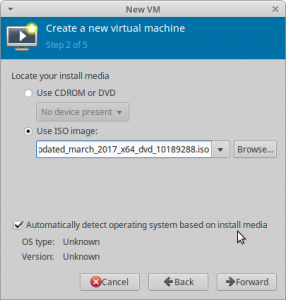

Step 2

Now we have to select the Windows ISO file we want to use for the installation (see figure 3). Also check the automatic system detection. Hint: Use the button “browse local” (one of the buttons on the right side) to browse to the ISO location.

Step 3

Put in the amount of RAM and CPU cores you want to pass through and continue with the wizard. I want to use 8 cores (16 is the maximum; the screenshot shows 12 by mistake!) and 16384 MiB of RAM in my VM.

Step 4

In case you use a storage file, select your previously created storage file and continue. I uncheck the “Enable storage for this virtual machine” check-box and add my device later on.

Step 5

The last step requires slightly more clicks.

Put in a meaningful name for the virtual machine. This becomes the name of the XML config file, so I would not use anything with spaces in it. It might work without a problem, but I wasn’t brave enough to try it in the past.

Furthermore make sure you check “Customize configuration before install”.

For the “network selection” pick “Specify shared device name” and type in the name of the network bridge we created previously. You can use ifconfig (or ip link) in a terminal to show your Ethernet devices. In my case that is “bridge0”.

Remark! The CPU count in Figure 7 is wrong. It should say 8 if you followed the guide.

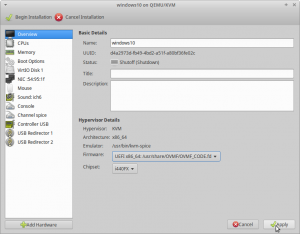

First configuration

Once you have pressed “finish”, the virtual machine configuration window opens. The left column displays all hardware devices this VM uses. By left-clicking on them, you see the options for the device on the right side. You can remove hardware via right-click, and add more hardware via the button below. Make sure to hit apply after every change.

The following screenshots may vary slightly from your GUI (as I have added and removed some hardware devices).

Overview

On the Overview entry in the list, make sure that “UEFI x86_64 [...] OVMF [...]” is selected for “Firmware” and that “Chipset” is Q35 (see figure 8).

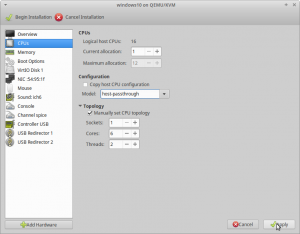

CPUs

For the “Model:” click into the drop-down, as if it were a text field, and type in

host-passthrough

This will pass all CPU information to the guest. You can read the CPU Model Information chapter in the performance guide for further information.

For “Topology” check “Manually set CPU topology” with the following values:

- Sockets: 1

- Cores: 4

- Threads: 2

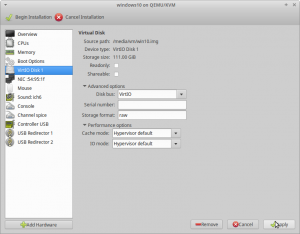

Disk 1, the Guests hard drive

Depending on your storage pre-configuration step you have to choose the adequate storage type for your disk (raw image container or passed-through real hardware). See the storage article for further options.

Setup with raw image container

When you first enter this section it will say “IDE Disk 1”. We have to change the “Disk bus:” value to VirtIO.

Setup with passed-through drive

Find out the correct device name of the drive (e.g. nvme0n1) via lsblk.

When editing the VM configuration for the first time, add the following block in the <devices> section (right after the </emulator> line is a good place).

<disk type='block' device='disk'>
  <driver name='qemu' type='raw' cache='none' io='native' discard='unmap'/>
  <source dev='/dev/nvme0n1'/>
  <target dev='sdb' bus='sata'/>
  <boot order='1'/>
  <address type='drive' controller='0' bus='0' target='0' unit='1'/>
</disk>

Edit the line

<source dev='/dev/nvme0n1'/>

to match your drive name.

VirtIO Driver

Next we have to add the virtIO driver ISO, so it is available during the Windows installation. Otherwise, the installer cannot recognize the storage volume we have just changed from IDE to virtIO.

In order to add the driver, press “Add Hardware”, select “Storage” and select the downloaded image file.

For “Device type:” select CD-ROM device. For “Bus type:” select IDE (or SATA, as in my final configuration), not VirtIO; otherwise Windows will not find the CD-ROM either 😛 (see Figure 10).

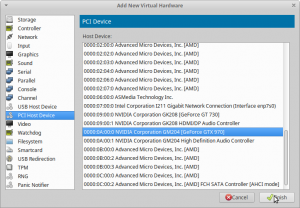

The GPU passthrough

Finally! In order to set up the GPU passthrough, we have to add our guest GPU and the USB controller to the virtual machine. Click “Add Hardware”, select “PCI Host Device” and find the device by its PCI bus address. Do this three times:

- 0000:0c:00.0 for GeForce GTX 1060

- 0000:0c:00.1 for GeForce GTX 1060 Audio

- 0000:0d:00.3 for the USB controller

Remark: In case you later add further hardware (e.g. another PCIe device), these IDs may well change. If you change the hardware, just redo this step with the updated IDs.

This should be it. Plug a second mouse and keyboard into the USB ports of the passed-through controller (see figure 2).

Hit “Begin installation”; a TianoCore logo should appear on the monitor connected to the GTX 1060. If a funny white-and-yellow shell pops up, you can type exit to leave it.

If nothing happens, make sure both CD-ROM devices (one for the Windows 10 ISO and one for the virtIO driver ISO) are in your list. Also check the “boot options” entry.

Once you see the Windows installation, use “force off” from virt-manager to stop the VM.
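If you later prefer virsh edit over the “PCI Host Device” dialog, the <hostdev> entries can also be written by hand. The ones in my final configuration at the end of this post have this shape, plus a guest-side <address>. The sketch below turns a PCI bus address into such a block and leaves the guest-side address out. My assumption is that libvirt then assigns one itself, as it does for other devices without an explicit address. hostdev_xml is a hypothetical helper.

```shell
# Hypothetical helper: turn a PCI address such as 0000:0c:00.0 into a libvirt
# <hostdev> block. The guest-side <address> is left out so libvirt can pick one.
hostdev_xml() {
    local addr="$1" rest domain bus slot func
    case "$addr" in
        [0-9a-f][0-9a-f][0-9a-f][0-9a-f]:[0-9a-f][0-9a-f]:[0-9a-f][0-9a-f].[0-7]) ;;
        *) echo "expected an address like 0000:0c:00.0, got '$addr'" >&2; return 1 ;;
    esac
    domain=${addr%%:*}    # 0000
    rest=${addr#*:}       # 0c:00.0
    bus=${rest%%:*}       # 0c
    rest=${rest#*:}       # 00.0
    slot=${rest%%.*}      # 00
    func=${rest#*.}       # 0
    printf '<hostdev mode="subsystem" type="pci" managed="yes">\n'
    printf '  <source>\n'
    printf '    <address domain="0x%s" bus="0x%s" slot="0x%s" function="0x%s"/>\n' \
        "$domain" "$bus" "$slot" "$func"
    printf '  </source>\n'
    printf '</hostdev>\n'
}
```

For example, for a in 0000:0c:00.0 0000:0c:00.1 0000:0d:00.3; do hostdev_xml "$a"; done prints blocks for the three devices above. These can be pasted into the <devices> section.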

Final configuration and optional steps

In order to edit the virtual machine’s configuration, use: virsh edit your-windows-vm-name

Once you’re done editing, press CTRL+x, then y, then Enter to save the changes and exit the editor.

I have added the following changes to my configuration:

AMD Ryzen CPU optimizations

I moved this section into a separate article; see the CPU pinning part of the performance optimization article.
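The <cputune> section of my final configuration below pins vCPUs 0 to 7 one-to-one onto host CPUs 8 to 15. The CPU topology of 1 socket × 4 cores × 2 threads gives the same 8 vCPUs. Typing those lines by hand is error-prone, so here is a small sketch that generates them. gen_vcpupin is a hypothetical helper. Whether a contiguous range like 8 to 15 is a sensible choice depends on your CPU's core/thread numbering (check lscpu -e), which is what the CPU pinning article covers.

```shell
# Hypothetical helper: print <vcpupin> lines mapping guest vCPUs 0..COUNT-1
# one-to-one onto consecutive host CPUs FIRST..FIRST+COUNT-1.
gen_vcpupin() {
    local first="$1" count="$2" v=0
    while [ "$v" -lt "$count" ]; do
        printf '    <vcpupin vcpu="%d" cpuset="%d"/>\n' "$v" "$((first + v))"
        v=$((v + 1))
    done
}
```

gen_vcpupin 8 8 reproduces the eight <vcpupin> lines of my configuration. The emulatorpin and iothreadpin lines still have to be added by hand.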

Hugepages for better RAM performance

This step is optional and requires some previous setup: see the Hugepages post for details.

Find the line which ends with </currentMemory> and add the following block after it:

<memoryBacking>
  <hugepages/>
</memoryBacking>

Remark: Make sure <memoryBacking> and <currentMemory> have the same indentation.
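How many hugepages the guest needs is simple arithmetic: guest memory divided by the page size. This sketch assumes the default 2 MiB (2048 KiB) hugepage size on x86_64; check Hugepagesize in /proc/meminfo. hugepages_for_mib is a hypothetical helper. For my 16384 MiB guest, 16384 × 1024 = 16777216 KiB (the <memory> value of my final configuration), and dividing by 2048 KiB gives 8192 pages. How to actually reserve them is covered in the Hugepages post.

```shell
# Hypothetical helper: number of hugepages needed to back MIB mebibytes of
# guest RAM, for a page size of PAGE_KIB kibibytes (default 2048 = 2 MiB).
# Rounds up so the whole guest memory is covered.
hugepages_for_mib() {
    local mib="$1" page_kib="${2:-2048}" kib
    kib=$((mib * 1024))
    echo $(( (kib + page_kib - 1) / page_kib ))
}
```

hugepages_for_mib 16384 gives 8192. With 1 GiB pages, hugepages_for_mib 16384 1048576 gives 16.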

Performance tuning

Troubleshooting

Removing Error 43 for Nvidia cards

This guide uses an Nvidia card as guest GPU. Unfortunately, the Nvidia driver throws Error 43 if it detects that the GPU is being passed through to a virtual machine.

Update: With Nvidia driver v465 (or later), Nvidia officially supports the use of consumer GPUs in virtual environments. Thus, the edits recommended in this section are no longer required.

I rewrote this section and moved it into a separate article.

Getting audio to work

After some sleepless nights I wrote a separate article about that; see the chapter Pulse Audio with QEMU 4.2 (and above).

Removing stutter on Guest

There are quite a few software and hardware version combinations which might result in poor guest performance. I have created a separate article on known issues and common errors.

My final virtual machine libvirt XML configuration

<domain xmlns:qemu="http://libvirt.org/schemas/domain/qemu/1.0" type="kvm">

<name>win10-q35</name>

<uuid>b89553e7-78d3-4713-8b34-26f2267fef2c</uuid>

<title>Windows 10 20.04</title>

<description>Windows 10 18.03 updated to 20.04 running on /dev/nvme0n1 (500 GB)</description>

<metadata>

<libosinfo:libosinfo xmlns:libosinfo="http://libosinfo.org/xmlns/libvirt/domain/1.0">

<libosinfo:os id="http://microsoft.com/win/10"/>

</libosinfo:libosinfo>

</metadata>

<memory unit="KiB">16777216</memory>

<currentMemory unit="KiB">16777216</currentMemory>

<memoryBacking>

<hugepages/>

</memoryBacking>

<vcpu placement="static">8</vcpu>

<iothreads>2</iothreads>

<cputune>

<vcpupin vcpu="0" cpuset="8"/>

<vcpupin vcpu="1" cpuset="9"/>

<vcpupin vcpu="2" cpuset="10"/>

<vcpupin vcpu="3" cpuset="11"/>

<vcpupin vcpu="4" cpuset="12"/>

<vcpupin vcpu="5" cpuset="13"/>

<vcpupin vcpu="6" cpuset="14"/>

<vcpupin vcpu="7" cpuset="15"/>

<emulatorpin cpuset="0-1"/>

<iothreadpin iothread="1" cpuset="0-1"/>

<iothreadpin iothread="2" cpuset="2-3"/>

</cputune>

<os>

<type arch="x86_64" machine="pc-q35-4.2">hvm</type>

<loader readonly="yes" type="pflash">/usr/share/OVMF/OVMF_CODE.ms.fd</loader>

<nvram>/var/lib/libvirt/qemu/nvram/win10-q35_VARS.fd</nvram>

</os>

<features>

<acpi/>

<apic/>

<hyperv>

<relaxed state="on"/>

<vapic state="on"/>

<spinlocks state="on" retries="8191"/>

<vpindex state="on"/>

<synic state="on"/>

<stimer state="on"/>

<reset state="on"/>

<vendor_id state="on" value="1234567890ab"/>

<frequencies state="on"/>

</hyperv>

<kvm>

<hidden state="on"/>

</kvm>

<vmport state="off"/>

<ioapic driver="kvm"/>

</features>

<cpu mode="host-passthrough" check="none">

<topology sockets="1" cores="4" threads="2"/>

<cache mode="passthrough"/>

<feature policy="require" name="topoext"/>

</cpu>

<clock offset="localtime">

<timer name="rtc" tickpolicy="catchup"/>

<timer name="pit" tickpolicy="delay"/>

<timer name="hpet" present="no"/>

<timer name="hypervclock" present="yes"/>

</clock>

<on_poweroff>destroy</on_poweroff>

<on_reboot>restart</on_reboot>

<on_crash>destroy</on_crash>

<pm>

<suspend-to-mem enabled="no"/>

<suspend-to-disk enabled="no"/>

</pm>

<devices>

<emulator>/usr/bin/qemu-system-x86_64</emulator>

<disk type="block" device="disk">

<driver name="qemu" type="raw" cache="none" io="native" discard="unmap"/>

<source dev="/dev/nvme0n1"/>

<target dev="sdb" bus="sata"/>

<boot order="1"/>

<address type="drive" controller="0" bus="0" target="0" unit="1"/>

</disk>

<disk type="file" device="cdrom">

<driver name="qemu" type="raw"/>

<source file="/home/mrn/Downloads/virtio-win-0.1.185.iso"/>

<target dev="sdc" bus="sata"/>

<readonly/>

<address type="drive" controller="0" bus="0" target="0" unit="2"/>

</disk>

<controller type="usb" index="0" model="qemu-xhci" ports="15">

<address type="pci" domain="0x0000" bus="0x02" slot="0x00" function="0x0"/>

</controller>

<controller type="sata" index="0">

<address type="pci" domain="0x0000" bus="0x00" slot="0x1f" function="0x2"/>

</controller>

<controller type="pci" index="0" model="pcie-root"/>

<controller type="pci" index="1" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="1" port="0x10"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x0" multifunction="on"/>

</controller>

<controller type="pci" index="2" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="2" port="0x11"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x1"/>

</controller>

<controller type="pci" index="3" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="3" port="0x12"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x2"/>

</controller>

<controller type="pci" index="4" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="4" port="0x13"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x3"/>

</controller>

<controller type="pci" index="5" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="5" port="0x14"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x4"/>

</controller>

<controller type="pci" index="6" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="6" port="0x15"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x5"/>

</controller>

<controller type="pci" index="7" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="7" port="0x16"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x6"/>

</controller>

<controller type="pci" index="8" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="8" port="0x17"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x02" function="0x7"/>

</controller>

<controller type="pci" index="9" model="pcie-root-port">

<model name="pcie-root-port"/>

<target chassis="9" port="0x8"/>

<address type="pci" domain="0x0000" bus="0x00" slot="0x01" function="0x0"/>

</controller>

<controller type="pci" index="10" model="pcie-to-pci-bridge">

<model name="pcie-pci-bridge"/>

<address type="pci" domain="0x0000" bus="0x08" slot="0x00" function="0x0"/>

</controller>

<controller type="virtio-serial" index="0">

<address type="pci" domain="0x0000" bus="0x03" slot="0x00" function="0x0"/>

</controller>

<interface type="bridge">

<mac address="52:54:00:fe:9f:a5"/>

<source bridge="bridge0"/>

<model type="virtio-net-pci"/>

<address type="pci" domain="0x0000" bus="0x01" slot="0x00" function="0x0"/>

</interface>

<input type="mouse" bus="ps2"/>

<input type="keyboard" bus="ps2"/>

<hostdev mode="subsystem" type="pci" managed="yes">

<source>

<address domain="0x0000" bus="0x0c" slot="0x00" function="0x0"/>

</source>

<address type="pci" domain="0x0000" bus="0x04" slot="0x00" function="0x0"/>

</hostdev>

<hostdev mode="subsystem" type="pci" managed="yes">

<source>

<address domain="0x0000" bus="0x0c" slot="0x00" function="0x1"/>

</source>

<address type="pci" domain="0x0000" bus="0x05" slot="0x00" function="0x0"/>

</hostdev>

<hostdev mode="subsystem" type="pci" managed="yes">

<source>

<address domain="0x0000" bus="0x0d" slot="0x00" function="0x3"/>

</source>

<address type="pci" domain="0x0000" bus="0x06" slot="0x00" function="0x0"/>

</hostdev>

<memballoon model="virtio">

<address type="pci" domain="0x0000" bus="0x07" slot="0x00" function="0x0"/>

</memballoon>

</devices>

<qemu:commandline>

<qemu:arg value="-device"/>

<qemu:arg value="ich9-intel-hda,bus=pcie.0,addr=0x1b"/>

<qemu:arg value="-device"/>

<qemu:arg value="hda-micro,audiodev=hda"/>

<qemu:arg value="-audiodev"/>

<qemu:arg value="pa,id=hda,server=unix:/run/user/1000/pulse/native"/>

</qemu:commandline>

</domain>

to be continued…

Sources

heiko-sieger.info: Really comprehensive guide

Great post by user “MichealS” on level1techs.com forum

Wendell's draft post on Level1Techs.com

Updates

- 2021-07-06 …. removed code 43 error handling

- 2020-06-18 …. initial creation of the 20.04 guide

- 2020-09-09 …. added further Pop!_OS remarks and clarified storage usage


BunnieofDoom

Good day Mathias,

Do you know if there is a way to fix the ACPI tables? A game I am currently playing seems to use that check to flag if you’re playing from inside a VM and disconnect you.

Mathias Hueber

Sorry, no idea. Which game is it though?

IGnatius T Foobar

Using this configuration, does the video output of the guest OS appear on the HDMI port of the guest GPU? And is the second keyboard/mouse required all the time or only during installation?

I am planning to attach the HDMI output to a spare port on my monitor, but I don’t want a separate keyboard and mouse.

Mathias Hueber

I use a KVM USB switch for mouse and keyboard. Depending on your use case (latency), there are other options too.

The video output for the guest always comes directly from the guest GPU.

My monitor uses one HDMI input for the host and the DisplayPort input for the guest. I switch input channels on the monitor directly to switch between host and guest. Depending on your monitor and GPU outputs, you can choose which signals you use.

Patrick

Given the lack of space on my desk, I’d also like to share my keyboard and mouse between host and guest. Since I’m a total beginner with all this, which solution would you recommend?

I’ve found https://rokups.github.io/#!pages/kvm-hid.md and it looks like a good start, even though it’s already 2 years old. Not sure if that’s still the recommended way to do a single-keyboard setup?

Also when you say latency, do you mean between each key press and the host/guest noticing the event or do you mean latency only when switching devices from host to guest? I wouldn’t mind the latter as I don’t switch so often but if the mouse was off by a second in the guest constantly, it’s unusable for gaming.

Oh, and thanks for writing all this down, this is a great starting point for a vm-noob like me!

Mathias Hueber

Yes, it’s the latency between each key press. Thus, software KVM solutions are usually not a good idea for fast-paced games.

I share my mouse and keyboard as well, but I use a KVM USB switch. It works pretty well. This eliminates the latency debt, at least for the hardware connection part.

sonata

Hi, it seems the libvirt XML configuration file at the end of the article is missing. Could you please update the article and append it? Thank you.

Mathias Hueber

Thank you for the hint!

Stephan

“In this guide I pass my NVME M.2 SSD to the virtual machine.”

But how did you do that? Could you tell me?

Stephan

Ah, it was late at night. So: add the device ID to the GRUB file, change AppArmor and add the device in virt-manager. Thank you for this guide. I think it is one of the best guides for passthrough on the internet.

You say “In order to isolate the GPU we have two options. Select the devices by PCI bus address or by device ID. Both options have pros and cons. ”

Do you maybe have more information about this, a link maybe?

Mathias Hueber

> So add device id to grub file, change apparmor and add device in virtmanager.

The NVME SSD can be passed through without identification numbers. It is crucial though that it has its own group. I also didn’t add the apparmor stuff. The storage article was written in combination with the 18.04 version – I guess I have to clean this up a bit.

> Do you maybe have more information about this, a link maybe?

If you select the device by device ID, you cannot distinguish between two cards of the same model, as both cards will have the same device ID.

If you select the device by bus address, you are basically selecting it via its “position” on the board. This lets you select one card even if both are identical (brand/model). The downside is that bus addresses change as soon as you add or remove hardware.
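You can compare the IDs in `lspci -nn` to check whether two installed cards share a vendor:device ID, and therefore cannot be separated via `vfio-pci.ids=`. Below is a minimal bash sketch. The function name `duplicate_ids` is hypothetical. It assumes the non-verbose `lspci -nn` format, where each line carries exactly one `[vendor:device]` pair. The verbose `-nnv` output also lists subsystem IDs, which would skew the result.

```bash
#!/usr/bin/env bash
# duplicate_ids (hypothetical helper): reads `lspci -nn` output on stdin and
# prints every vendor:device ID that occurs more than once. Devices sharing an
# ID cannot be told apart via vfio-pci.ids=, so select them by bus address.
duplicate_ids() {
  # First grep keeps only bracketed hex pairs such as [10de:06fd]; class codes
  # like [0300] and names like [Quadro NVS 295] do not match this pattern.
  # Second grep strips the brackets again.
  grep -oE '\[[0-9a-f]{4}:[0-9a-f]{4}\]' \
    | grep -oE '[0-9a-f]{4}:[0-9a-f]{4}' \
    | sort \
    | uniq -d
}
```

Run it as `lspci -nn | duplicate_ids`. With two Quadro NVS 295 cards, as in bob's comment further down, it would print `10de:06fd`.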

I hope this helps

Maxime

Thanks for the guide, very insightful !

A couple questions though :

– Can you achieve 240 FPS/Hz?

– What is the input lag like ? (assuming the guest Keyboard & Mouse are plugged directly)

– Does it work with a dedicated Nvidia card for the guest and the onboard graphic chip available on the Intel CPUs ?

Eli

I have an issue when I try to Begin installation and it gives this error https://pastebin.com/n162y61b (group 14 is not viable). When I do lspci -nnv it shows

Kernel driver in use: vfio-pci

Kernel modules: radeon

like it’s supposed to, so I don’t fully understand what is wrong. I am new to Linux and would like some help. Thanks for reading.

Mathias Hueber

The error message in your pastebin says:

“Please ensure all devices within the iommu_group are bound to their vfio bus driver.”

Which devices are part of the IOMMU group? You have to unbind all devices that are part of the same IOMMU group from their host drivers. In case your mainboard does not support proper IOMMU groupings, you can tinker around with the ACS patch.
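You can inspect sysfs directly to see which members of an IOMMU group are still held by a host driver. Below is a minimal bash sketch. It assumes the standard layout `/sys/kernel/iommu_groups/<N>/devices/<address>`, with a `driver` symlink for each bound device. The function name and the optional root argument are hypothetical; the root argument exists only so the logic can be tried against a mock directory.

The sketch skips devices without any driver, since VFIO accepts unbound devices. PCI bridges bound to `pcieport` are generally tolerated by VFIO as well, so such entries are usually not the culprit.

```bash
#!/usr/bin/env bash
# group_unbound_devices (hypothetical helper): prints "<address> <driver>" for
# every device in IOMMU group $1 that is bound to a driver other than vfio-pci.
# $2 optionally overrides the sysfs root (default: /sys).
group_unbound_devices() {
  local group="$1" root="${2:-/sys}" dev driver
  for dev in "$root/kernel/iommu_groups/$group/devices/"*; do
    [ -e "$dev" ] || continue          # glob did not match: no such group
    [ -L "$dev/driver" ] || continue   # no driver bound: acceptable for VFIO
    # The driver entry is a symlink whose last component is the driver name.
    driver=$(basename "$(readlink "$dev/driver")")
    if [ "$driver" != "vfio-pci" ]; then
      echo "$(basename "$dev") $driver"
    fi
  done
}
```

For example, `group_unbound_devices 14` prints one line per device in group 14 that is still bound to a host driver.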

Eli

After using

dmesg | egrep group | awk '{print $NF" "$0}' | sort -n

in the terminal (https://pastebin.com/xNZVQiZ7), it looks like I failed to isolate the GPU from the group. Do you have a link or more information on how to isolate/unbind all devices? I’m sorry, this is very new to me, and I appreciate the help so far.

(In case it helps, I’m using a B450 AORUS PRO WIFI with a Ryzen 7 2700X, a GTX 1050 Ti for the host and a Radeon HD 4870 for the guest.)

Mathias Hueber

Have you done the step “Identification of the guest GPU”? What is the output of the script?

Eli

https://pastebin.com/wti8i8aQ

Using this output, I followed the other steps and copied what you did, just with my identification numbers instead. But after looking at the group it is in, I think I did this incorrectly in some way. I did look through the other steps and double-checked that I wrote down the right IDs.

Mathias Hueber

Ok. Your plan is to pass the radeon card to the guest, correct?

Currently, your radeon card is in iommu group 14. As you can see in the output of the script, there are additional devices (12 to be precise) in this group. If you want to pass one or more device(s) from group 14, you have to pass all 12 to the guest. This is not feasible as for example your network adapter is part of this group.

A better group to pass to the guest is for example iommu group 15. Your Nvidia GPU is the only device in it – thus it is easy to pass. If you want to pass the radeon card you could try to switch the gpus on the mainboard.

One more thing, the USB controller from iommu group 18 looks good for passing it to the guest.
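To find a group that is easy to pass, like group 15 here, count the members of each group. Below is a small bash sketch. It assumes the standard sysfs layout `/sys/kernel/iommu_groups/<N>/devices/`. The function name `iommu_group_sizes` and the optional root argument are hypothetical.

```bash
#!/usr/bin/env bash
# iommu_group_sizes (hypothetical helper): prints "<group> <device count>" for
# every IOMMU group, sorted by group number. $1 optionally overrides the sysfs
# root (default: /sys).
iommu_group_sizes() {
  local root="${1:-/sys}" group
  local -a devs
  for group in "$root/kernel/iommu_groups/"*; do
    [ -d "$group/devices" ] || continue
    devs=("$group/devices/"*)
    # An unmatched glob leaves the literal pattern behind: treat as empty.
    [ -e "${devs[0]}" ] || devs=()
    echo "$(basename "$group") ${#devs[@]}"
  done | sort -n
}
```

A graphics card usually appears with two or more functions (VGA, audio, and on some cards USB or serial). A group with many more members, like group 14 above, usually also contains unrelated onboard devices.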

Eli

Sorry it has taken me so long to respond. I have booted up the vm, but I do not see a gpu there it shows up like a normal vm without a gpu. https://imgur.com/a/lUIIyZx

https://imgur.com/a/DfrrQnn I read the post by Amygdala but I don’t really understand the wiki that was linked. Thank you so much for the help.

Amygdala

I’ve arrived at the point where the VM boots, but it displays the video output in the virt-manager window rather than on its own dedicated monitor. Specs:

Ryzen 9 3900

32GB RAM

Host GPU: Nvidia Geforce GTX 1070

VM GPU: Nvidia Geforce RTX 2070 Super

VM Storage: Physical disk passed as block device.

The 2070 has 4 devices in its IOMMU group, and I’ve added all of those device ids to grub. On boot the VM GPU does appear to be grabbed by the vfio-pci kernel module. When I boot the VM, the VM GPU screen goes blank, but nothing else. The VM display output instead appears in the virt-manager window. I tried removing the Display Spice device but that didn’t seem to help. Any suggestions would be most welcome

Amygdala

Progress! Using info found in the Almighty Arch Wiki this gave me the display output on the VM monitor:

https://wiki.archlinux.org/index.php/PCI_passthrough_via_OVMF#“BAR_3:_cannot_reserve_[mem]”_error_in_dmesg_after_starting_VM

Now I assume I will need to run this every time I start the VM, so some sort of libvirt hook needs to be rolled. I had to execute these commands from an interactive root login, not from sudo. So that might be interesting..
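One way to automate this is a libvirt hook. If `/etc/libvirt/hooks/qemu` exists and is executable, libvirt runs it as root, passing the guest name and the operation as the first two arguments. The sketch below assumes the remove/rescan workaround from that Arch Wiki section, the same two commands DavidM quotes further down.

`VM_NAME`, `BRIDGE` and the `SYSFS_ROOT` override are placeholders or assumptions, not values from this guide. Look up the bridge address for your own board. libvirt may need to be restarted before it notices a newly created hook file.

```bash
#!/usr/bin/env bash
# Sketch of /etc/libvirt/hooks/qemu (must be executable).
# libvirt calls it as: qemu <guest name> <operation> <sub-operation> <extra>
VM_NAME="win10"                   # placeholder: your VM's name in virt-manager
BRIDGE="0000:00:03.1"             # placeholder: PCI bridge above the guest GPU
SYSFS_ROOT="${SYSFS_ROOT:-/sys}"  # assumption: overridable only for testing

# Remove the bridge (and everything behind it), then rescan the PCI bus.
rescan_bridge() {
  echo 1 > "$SYSFS_ROOT/bus/pci/devices/$1/remove"
  echo 1 > "$SYSFS_ROOT/bus/pci/rescan"
}

main() {
  # "prepare" runs before libvirt sets up resources for the guest.
  if [ "$1" = "$VM_NAME" ] && [ "$2" = "prepare" ]; then
    rescan_bridge "$BRIDGE"
  fi
}

main "$@"
```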

Patti4832

Had the same problem with an RTX 3070, but the commands didn’t work.

Ferdi

I was getting output from the GPU that I passed through, but Windows was really buggy: I couldn’t open any windows.

I changed the “video” from QXL to virtio and it worked fine.

Sam

Hello Mathias, thank you for the detailed guide. Sorry if this is a foolish question. For background, I have one GPU and one sound card installed in the 2 PCIe x16 slots on my motherboard, i.e. no space to install another GPU.

When you say “In order to pass a device over to the virtual machine, we have to pass all the devices of the same IOMMU group as well.”, would it be possible to set it up so that the GPU and sound card are used by the host, are passed through to the guest when the VM launches (i.e. can no longer be used by the host), and become available to the host again when the VM quits?

Adam

Hi, thanks for the detailed guide, I’m trying it out on an Intel NUC with Nvidia eGPU. It has VT-d support, and the IOMMU groups look correct. I’m able to set the vfio-pci ids with grub, and after reboot the correct vfio driver is loaded (checking over SSH), but after the splash screen the ubuntu desktop login does not boot, just a black screen, it seems intel’s integrated graphics is not being used for the host (to be clear the monitor is plugged into the NUC not the eGPU). Any ideas? Thanks!

Adam Suban-Loewen

Update: Still haven’t resolved the boot issue, ignoring the intel integrated graphics. But found a workaround: boot with the eGPU off, login to desktop environment displayed with intel integrated graphics, then turn on the eGPU and confirm it’s using vfio-pci as the driver. Everything else worked as expected, benchmarking surprisingly well. Thanks for such a great guide! If anyone has any insight to what’s going on with boot, please let me know.

Renny

Hi, I have followed many of your tutorials with success, like setting up audio in a KVM guest and fixing error 43. I managed to get a Win10 virtual machine with a passed-through GPU that works flawlessly. Since I decided to switch to a low-powered, small form factor GPU (GT 710) for host output, I tried setting up an Ubuntu guest VM with the better GPU passed through. Yet here the virtualized OS seems not to use the GPU and instead lists the video output as llvmpipe. Any idea how to get a Linux guest up and running with the passed-through GPU?

BTW, the system doesn’t seem to recognize any drivers to be installed.

Kiloneie

I am able to get the GPU passed through and get into that weird yellow console, but from there it refuses to boot no matter what I try. It detects both CD-ROMs and displays their names (VFIO driver and Win10 ISO), but it will NOT boot. With the Q35 chipset and UEFI firmware, first, you cannot use IDE, and your XML file also puts the CD-ROMs on the SATA bus. Second, I get an error when I begin the installation: “Unable to complete install: ‘unsupported configuration: per-device boot elements cannot be used together with os/boot elements’”. I have no clue what this means, and googling the issue only finds other console-based VM setups, while I’m almost done with this one.

If I use the other default chipset, i440FX, and BIOS as firmware, I get booted into the Win 10 installer, can install the VFIO drivers from inside it and get Win10 online, but the GPU is not passed through. This works because with that chipset and firmware you can use IDE as the bus for the CD-ROM, which you cannot do with the Q35 chipset and UEFI firmware.

Here is my XML, which I edited based on yours in an effort to make it work, but I cannot: https://pastebin.com/0TiUwnPd

Mathias Hueber

Hello, I currently have no extra time for troubleshooting sessions. I would advise starting a related post on the /r/vfio subreddit. The community is pretty helpful.

Happy holidays!

Kiloneie

Also, the networking post you link for creating a bridge does NOT work. Instead, you can simply add the device in the last step of virt-manager’s step-by-step setup, which automatically creates a bridge that works, unlike the one from the bridge post.

Mathias Hueber

Ohh, okay, sorry to hear that. I haven’t had any problems with the article yet. Good to know that the wizard works for you.

Well, you should inform Heiko about the problem in his article.

Colins

I have a major problem that I cannot seem to fix. My idea was to use my Intel integrated graphics for the host and my RTX 2060 Super as the guest GPU. The GPU is in IOMMU group 1, with the PCI bus IDs and device IDs being 01:00.0_10de:1f06 for the VGA controller and 01:00.1_10de:10f9 for the audio device. Whenever I add the ID of my RTX 2060 Super, which is 10de:10f9, and of my audio controller, 10de:1f06, to GRUB and try to boot, it hangs at the message “[    0.475734] vfio-pci 0000:01:00.0: vgaarb: changed VGA decodes: olddecodes=io+mem,decodes=io+mem:owns=io+mem”. When I deleted the VGA controller part from GRUB, it booted, passing through my audio device (I believe), since I left that one in. Any ideas how to help me? It may be something stupid with the syntax, or I got the IOMMU groups wrong, but I would appreciate a reply of sorts.

Alexis.P

Is it possible to do the same thing, but with Windows as the host OS?

C0D3 M4513R

I have a problem: my screen turns off for the guest when I stay switched to the host for a longer time. As a consequence, I cannot just switch to Linux for the day, because then Windows would cease to function (the display no longer recognizing the input).

I also could not get virtio working, no matter what (I’m using an image for the host disk, btw!).

I find windows boot errors just the most frustrating. You don’t know what’s happening. You just hope, that the stuff you do doesn’t make it worse.

Hope you can help!

C0D3 M4513R

Ok, seems like the copying of my host drive has gone wrong there. All solved now!

Mathias Hueber

Glad you figured it out!

Bernd

Hi Mathias

Thanks for this interesting guide.

Do you think this is possible with a laptop that has an NVIDIA M150? As far as I know, this card is only “attached” to the integrated graphics provided by the processor (Intel i7).

The Nvidia card is in its own IOMMU group as a “3D controller”.

Mathias Hueber

I would search whether someone else has done it before. reddit.com/r/vfio is a good starting point, or the Level1Techs forum.

The main problem will be whether the mainboard supports it (not only the grouping).

DavidM

Hi Mathias,

I want to thank you for the great guide, it was a great intro into passthrough for me.

However, in the end I followed this guide: https://gitlab.com/Karuri/vfio as you can easily switch the passthrough on/off without having to reboot with updated grub each time.

For the error 43 folk, what resolved it for me (apart from the vendor_id stuff) was this:

# echo 1 > /sys/bus/pci/devices/0000\:00\:03.1/remove

# echo 1 > /sys/bus/pci/rescan

from https://wiki.archlinux.org/index.php/PCI_passthrough_via_OVMF

I haven’t tried it out, but I assume it should also work with Mathias’ guide.

Erol

Hi,

I need your help.

I was able to pass through a Sapphire Radeon RX 470 to Windows and to macOS Big Sur. I connected my second monitor to the Windows VM under KVM and can see everything perfectly. But in macOS Big Sur, I can see that it detects the card, yet it is using a default bogus card. How can I make macOS use my card? Thanks in advance.

Matt

I couldn’t get the guest OS to figure out that the radeon 7570 was present, despite it being properly installed in Device Manager. Two of the 3D programs told me I didn’t have the proper minimum video card.

Lukas

Hi, please help me. I’m not sure what to put here:

for dev in 0000:0c:00.0 0000:0c:00.1

My script output for my GPU:

IOMMU Group 57 21:00.0 3D controller [0302]: NVIDIA Corporation GK110BGL [Tesla K40m] [10de:1023] (rev a1)

Lukas

okay figured it out, in my case it was: 0000:21:00.0
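For anyone stuck at the same spot: the `for dev in …` line expects full PCI addresses, including the domain prefix `0000:`. The IOMMU script prints only the short `bus:slot.function` form. Below is a hypothetical bash helper for that conversion. It assumes the output format shown in Lukas' comment and a single PCI domain `0000`, the common case on desktop boards.

```bash
#!/usr/bin/env bash
# full_pci_address (hypothetical helper): reads IOMMU script lines such as
#   IOMMU Group 57 21:00.0 3D controller [0302]: NVIDIA ... [10de:1023] (rev a1)
# and prints the full address, e.g. 0000:21:00.0.
# Assumes every device lives in PCI domain 0000.
full_pci_address() {
  awk '$1 == "IOMMU" && $2 == "Group" &&
       $4 ~ /^[0-9a-f][0-9a-f]:[0-9a-f][0-9a-f]\.[0-7]$/ { print "0000:" $4 }'
}
```

To limit the output to one group, pipe the script's output through `grep 'Group 57 '` first.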

Mathias Hueber

Sorry for not replying. Lockdown with two kids eats up my me-time. Thanks for pinging back and making the solution available to others.

mert

Unable to complete install: ‘internal error: vendor cannot be 0.’

Traceback (most recent call last):

File "/usr/share/virt-manager/virtManager/asyncjob.py", line 75, in cb_wrapper

callback(asyncjob, *args, **kwargs)

File "/usr/share/virt-manager/virtManager/createvm.py", line 2089, in _do_async_install

guest.installer_instance.start_install(guest, meter=meter)

File "/usr/share/virt-manager/virtinst/install/installer.py", line 542, in start_install

domain = self._create_guest(

File "/usr/share/virt-manager/virtinst/install/installer.py", line 491, in _create_guest

domain = self.conn.createXML(install_xml or final_xml, 0)

File "/usr/lib/python3/dist-packages/libvirt.py", line 4034, in createXML

if ret is None:raise libvirtError('virDomainCreateXML() failed', conn=self)

libvirt.libvirtError: internal error: vendor cannot be 0.

HELP ME PLS !!!

dic3jam

Mathias,

First of all, thank you for putting in all the effort to create this detailed guide and share it with the rest of us mortals. I have followed the whole thing and have successfully implemented hardware passthrough to a Windows VM. I have also followed the subsequent performance guide. The only thing I was unable to implement was hugepages, as I have Ubuntu 20.04, which does not allow you to set up hugepages in the traditional sense (I am going to work on it, but I do not believe it is my problem). What I would like to know is whether you ever got to a point where you felt your games were stable enough to play. I notice that my older games play fine, but anything newer, or any first-person shooter, is a no-go.

Hardware:

Mobo: ROG B550-F

CPU: AMD Ryzen 7 1700

Mem: 32GB

Host OS: Ubuntu 20.04

My CPU utilization in the guest hits 100% with every game. Unigine returned a score of 6000 with LOTS of lag. Could my processor be underpowered? The Ryzen 7 1700 is several generations old and only rated at 3.0 GHz. Or is it worth the time to dig into the libvirt documentation and tweak the XML? What I really want to know is: if I dive into this, will I get any result? Or should I table this until I have a more modern processor that can handle the load?
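Regarding hugepages: the number of pages to reserve is the guest memory divided by the huge page size, rounded up. Below is a minimal bash sketch; the function name is hypothetical. It assumes a default page size of 2048 KiB, the usual value on x86-64. Check `Hugepagesize` in `/proc/meminfo` to confirm yours.

```bash
#!/usr/bin/env bash
# hugepages_needed (hypothetical helper): number of huge pages required to back
# a guest with $1 MiB of RAM, given a huge page size of $2 KiB (default 2048).
hugepages_needed() {
  local mem_kib=$(( $1 * 1024 )) page_kib="${2:-2048}"
  # Integer division rounded up, so the guest memory is fully covered.
  echo $(( (mem_kib + page_kib - 1) / page_kib ))
}
```

For a 16 GiB guest, `hugepages_needed 16384` gives 8192 pages of 2 MiB, and `hugepages_needed 16384 1048576` gives 16 pages of 1 GiB. For the default page size, this result is the value to put into the `vm.nr_hugepages` sysctl.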

Mathias Hueber

Hey, glad the guides are helpful.

About your question, I play all my games on the VM.

The first year wasn’t as smooth as expected, but since then I have played overwatch, gta5, league of legends, pubg and cod total warfare very stable and in reasonable quality… Well maybe all but pubg.

You are right though, the 1700 is aged quite a bit, and the first versions had problems especially with the Linux support.

I came to a point where I wasn’t able to optimize the VM any further for PUBG. Thus, I ended up upgrading to the 3700X last year. The nice thing is that I only had to buy the CPU, as the ASUS X370 board still supported it.

To make the answer a bit broader: you should be able to get the same level of stability for your games in the virtual machine. However, the 1700 CPU is, in retrospect, not without flaws on a Linux host.

Matt

Why do you play all your games in the VM? Overwatch, GTAV, and LoL all work in Linux already. Overwatch especially often runs better on Linux than on Windows.

Maybe I’m making a false assumption that you care about Linux gaming, but you should do all your gaming on Linux unless the game does not work (or works but has major issues), and use the VM for those games only.

Also, how do you play PUBG since they were using BattlEye when you made this comment and BattlEye blocks VMs? Are you still able to play now that PUBG has their own kernel anticheat?

And cpu pinning and a few optimizations can make your VM perform like bare metal. I give my single-GPU passthrough VM 8 cores/16 threads of my 5900X, so it basically has a virtual 5800X. And every benchmark I run gives results that are in line with a highly-binned 5800X (which is weird, because the 5800X has only one CCX but the 5900X has 6 cores each on 2 CCX’s, so you’d think the latency across CCXs would maybe decrease performance, but at the same time the 5900X is higher-binned silicon and boosts higher, so I guess that makes up for it).

Before that I had an actual 5800X and gave it 6 cores/12 threads and got highly-binned 5600X performance in the VM.
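The pinning Matt describes is configured in the domain XML, with one `<vcpupin>` element per guest vCPU inside `<cputune>`. Writing 16 of these by hand is tedious, so below is a small, hypothetical bash generator. It does not work out which host CPU numbers belong together (SMT siblings, same CCX), because that is system specific. Check `lscpu -e` and pass the host CPUs in the order you want them mapped.

```bash
#!/usr/bin/env bash
# vcpupin_xml (hypothetical helper): prints a libvirt <cputune> block that pins
# guest vCPU 0, 1, 2, ... to the host CPUs given as arguments, in that order.
vcpupin_xml() {
  local vcpu=0 cpu
  echo "<cputune>"
  for cpu in "$@"; do
    echo "  <vcpupin vcpu=\"$vcpu\" cpuset=\"$cpu\"/>"
    vcpu=$(( vcpu + 1 ))
  done
  echo "</cputune>"
}
```

Suppose `lscpu -e` lists 2/14 and 3/15 as sibling pairs. Then `vcpupin_xml 2 14 3 15` maps vCPUs 0 to 3 onto those threads. The number of arguments should match the domain's `<vcpu>` count.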

jmginer

Hi, I have 3 monitors connected to the graphics card I want to passthrough, do I have to connect them to the host graphics card?

Mathias Hueber

I am not sure if I understand correctly.

If you want to use a monitor on the host, you have to connect it to the host GPU. If you want to use it on the guest, you have to connect it to the guest GPU. If you want to use it on both, connect it to both (your monitor needs enough inputs for that). Does this help?

Marcell

Dear Mathias,

Your description is amazing. I have almost achieved GPU PCI passthrough, but I’m struggling with the “please ensure all devices within the iommu_group are bound to their vfio bus driver” error message. I have seen that another reader asked you about this as well. I do understand the problem and tried to pass through the other devices too (see the list below), following your description (Apply VFIO-pci driver by PCI bus id (via script)), but with no success. The audio device and the VGA controller went well, but the others did not work. I have also tried the ACS override patch, but with no success (the group could not really be split). Do you have a hint or a link where I could search for a solution?

I would really appreciate your help.

Best Regards,

Marcell

List of IOMMU Group 1:

-e 00:01.0 PCI bridge [0604]: Intel Corporation Xeon E3-1200 v5/E3-1500 v5/6th Gen Core Processor PCIe Controller (x16) [8086:1901] (rev 07)

-e 01:00.0 VGA compatible controller [0300]: NVIDIA Corporation TU116M [GeForce GTX 1660 Ti Mobile] [10de:2191] (rev a1)

-e 01:00.1 Audio device [0403]: NVIDIA Corporation TU116 High Definition Audio Controller [10de:1aeb] (rev a1)

-e 01:00.2 USB controller [0c03]: NVIDIA Corporation TU116 USB 3.1 Host Controller [10de:1aec] (rev a1)

-e 01:00.3 Serial bus controller [0c80]: NVIDIA Corporation TU116 [GeForce GTX 1650 SUPER] [10de:1aed] (rev a1)


Agustin

Dear Mathias, how are you?

This tutorial is great!

I have an ASUS TUF Gaming laptop. I did the host passthrough and even solved error 43, but I can’t connect a monitor directly to my GPU. Did you try it with Looking Glass (looking-glass.io/)? Or what do you recommend?

Best Regards.

Agustín.

dureal99d

https://mathiashueber.com/fighting-error-43-nvidia-gpu-virtual-machine/

Follow these steps and you will see success.

Michel

When I’m installing the guest OS, I don’t get any output from the passthrough GPU. I only get output when I complete the installation process and after I download and install the Nvidia drivers. After that, I still don’t get any output during the boot up or shutdown process. I assume this happens because of some default setting on virt-manager. Is there any way to change this?

Derek

Hi Mathias,

I’m stuck and would really appreciate your advice.

I’ve got two GPUs of the same model/ID. I tried your instructions in “Apply VFIO-pci driver by PCI bus id (via script)” but it only gets the audio device on the GPU to bind to vfio-pci and not the VGA compatible controller.

The line “/sys/bus/pci/devices/$dev/driver_override” seems to work as I looked at the file and could see ‘vfio-pci’ written.

However, with ‘/sys/bus/pci/drivers/vfio-pci/bind’, I can’t read it at all (permission denied, even using sudo).

I haven’t found any solution that works so far. Any idea what the problem might be?

Ammonite

Hi Derek,

I’m in the same situation. I found a way to make it work. I added in the `vfio.sh` script a line to prevent the use of the nvidia driver. Here is what I have in the script:

echo "vfio-pci" > /sys/bus/pci/devices/$dev/driver_override

echo "$dev" > /sys/bus/pci/drivers/nvidia/unbind

echo "$dev" > /sys/bus/pci/drivers/vfio-pci/bind
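Here is a variant that does not hard-code the nvidia driver. After `driver_override` is set, the device is unbound from whatever driver currently owns it. It is then re-probed via `/sys/bus/pci/drivers_probe`; the kernel honours the override and picks vfio-pci.

This is a sketch, not the guide's vfio.sh. It must run as root: a plain `sudo echo x > file` fails because the unprivileged shell performs the redirection. `SYSFS_ROOT` is an assumption, added only so the logic can be tested against a mock directory.

```bash
#!/usr/bin/env bash
# Sketch: hand PCI devices (full addresses) over to vfio-pci. Run as root.
SYSFS_ROOT="${SYSFS_ROOT:-/sys}"   # assumption: overridable only for testing

bind_to_vfio() {
  local dev path
  for dev in "$@"; do
    path="$SYSFS_ROOT/bus/pci/devices/$dev"
    # 1. Allow only vfio-pci to claim this device from now on.
    echo "vfio-pci" > "$path/driver_override"
    # 2. Release it from its current driver (nvidia, nouveau, amdgpu, ...).
    if [ -e "$path/driver" ]; then
      echo "$dev" > "$path/driver/unbind"
    fi
    # 3. Re-probe the device; the override makes the kernel pick vfio-pci.
    echo "$dev" > "$SYSFS_ROOT/bus/pci/drivers_probe"
  done
}

# Devices are given as arguments, e.g. the second of two identical cards.
if [ "$#" -gt 0 ]; then
  bind_to_vfio "$@"
fi
```

For example, `sudo ./bind-vfio.sh 0000:0b:00.0` would hand the second of two identical cards to vfio-pci. The file name `bind-vfio.sh` is hypothetical.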

stepkas

Man, thanks a lot, you can’t even imagine how grateful i am for this advice!


bob

I have two of the same GPU in my system, and the vfio ID is the same for both. I’m not sure how to configure this correctly. My attempt is below. I was able to add the hardware and pick my “0b:00.0”, but all I get is the normal VM booting to the GUI console, no passthrough.

Here’s my /etc/default/grub

GRUB_CMDLINE_LINUX_DEFAULT="amd_iommu=on iommu=pt kvm.ignore_msrs=1 vfio-pci.ids=10de:06fd"

And lspci -nnv

0a:00.0 VGA compatible controller [0300]: NVIDIA Corporation G98 [Quadro NVS 295] [10de:06fd] (rev a1) (prog-if 00 [VGA controller])

Subsystem: NVIDIA Corporation G98 [Quadro NVS 295] [10de:062e]

Flags: fast devsel, IRQ 123

Memory at ee000000 (32-bit, non-prefetchable) [size=16M]

Memory at e4000000 (64-bit, prefetchable) [size=64M]

Memory at ec000000 (64-bit, non-prefetchable) [size=32M]

I/O ports at f000 [size=128]

Expansion ROM at 000c0000 [disabled] [size=128K]

Capabilities: [60] Power Management version 3

Capabilities: [68] MSI: Enable- Count=1/1 Maskable- 64bit+

Capabilities: [78] Express Endpoint, MSI 00

Capabilities: [100] Virtual Channel

Capabilities: [128] Power Budgeting

Capabilities: [600] Vendor Specific Information: ID=0001 Rev=1 Len=024

Kernel driver in use: vfio-pci

Kernel modules: nvidiafb, nouveau, nvidia

0b:00.0 VGA compatible controller [0300]: NVIDIA Corporation G98 [Quadro NVS 295] [10de:06fd] (rev a1) (prog-if 00 [VGA controller])

Subsystem: NVIDIA Corporation G98 [Quadro NVS 295] [10de:062e]

Flags: fast devsel, IRQ 123

Memory at ea000000 (32-bit, non-prefetchable) [size=16M]

Memory at e0000000 (64-bit, prefetchable) [size=64M]

Memory at e8000000 (64-bit, non-prefetchable) [size=32M]

I/O ports at e000 [size=128]

Expansion ROM at eb000000 [disabled] [size=128K]

Capabilities: [60] Power Management version 3

Capabilities: [68] MSI: Enable- Count=1/1 Maskable- 64bit+

Capabilities: [78] Express Endpoint, MSI 00

Capabilities: [100] Virtual Channel

Capabilities: [128] Power Budgeting

Capabilities: [600] Vendor Specific Information: ID=0001 Rev=1 Len=024

Kernel driver in use: vfio-pci

Kernel modules: nvidiafb, nouveau, nvidia

bob

I should have read the article further.

After the vfio.sh steps I have the correct driver in use. Still no passthrough working but at least some progress.

0a:00.0 VGA compatible controller [0300]: NVIDIA Corporation G98 [Quadro NVS 295] [10de:06fd] (rev a1) (prog-if 00 [VGA controller])

Subsystem: NVIDIA Corporation G98 [Quadro NVS 295] [10de:062e]

Flags: bus master, fast devsel, latency 0, IRQ 122

Memory at ee000000 (32-bit, non-prefetchable) [size=16M]

Memory at e4000000 (64-bit, prefetchable) [size=64M]

Memory at ec000000 (64-bit, non-prefetchable) [size=32M]

I/O ports at f000 [size=128]

Expansion ROM at 000c0000 [virtual] [disabled] [size=128K]

Capabilities: [60] Power Management version 3

Capabilities: [68] MSI: Enable+ Count=1/1 Maskable- 64bit+

Capabilities: [78] Express Endpoint, MSI 00

Capabilities: [100] Virtual Channel

Capabilities: [128] Power Budgeting

Capabilities: [600] Vendor Specific Information: ID=0001 Rev=1 Len=024

Kernel driver in use: nvidia

Kernel modules: nvidiafb, nouveau, nvidia

0b:00.0 VGA compatible controller [0300]: NVIDIA Corporation G98 [Quadro NVS 295] [10de:06fd] (rev a1) (prog-if 00 [VGA controller])

Subsystem: NVIDIA Corporation G98 [Quadro NVS 295] [10de:062e]

Flags: fast devsel, IRQ 122

Memory at ea000000 (32-bit, non-prefetchable) [size=16M]

Memory at e0000000 (64-bit, prefetchable) [size=64M]

Memory at e8000000 (64-bit, non-prefetchable) [size=32M]

I/O ports at e000 [size=128]

Expansion ROM at eb000000 [disabled] [size=128K]

Capabilities: [60] Power Management version 3

Capabilities: [68] MSI: Enable- Count=1/1 Maskable- 64bit+

Capabilities: [78] Express Endpoint, MSI 00

Capabilities: [100] Virtual Channel

Capabilities: [128] Power Budgeting

Capabilities: [600] Vendor Specific Information: ID=0001 Rev=1 Len=024

Kernel driver in use: vfio-pci

Kernel modules: nvidiafb, nouveau, nvidia

bob

My NVIDIA NVS 295 card’s ROM does not support UEFI.

Does that mean I need to get a more recent card for this to work?

My configuration looks correct, with the second card using the vfio driver, but the passthrough fails to display video on the monitor; it still goes to the GUI console.

Leandro

Excellent post, I was able to use my video card in an Ubuntu VM.

Thank you.

Windows 10 with GPU passthrough crashes on Ubuntu 20.04 - Boot Panic

[…] helpful to debug the issue as obviously there is a lot of config involved. Initially I followed that guide, for 18.04 in the past and now for 20.04. Then I picked up different pieces here and […]

zzzhhh

Can you please write a step-by-step tutorial on how to set up GPU passthrough using QEMU 6.2 where the host OS is Windows 10 and the guest OS is Ubuntu 20.04?

Thanks a million in advance.

Matt

So I’ve just started reading this (I had never heard of this guide before; I used joeknock90’s and Karuri’s single-GPU passthrough guides when I set up my single-GPU passthrough VM). But so far there’s a glaring error, or maybe error is too strong a word; rather, a misleading statement:

“This means, *even when not used in the VM*, a device can no longer be used on the host, when it is an “IOMMU group sibling” of a passed through device.”

This is not true at all. I pass through a USB controller, so I can have my keyboard, mouse, and headset all running like bare metal with hotplug, which isn’t available with regular (non-PCIe) USB device passthrough. It also has a USB hub and a USB 3 Gigabit Ethernet adapter attached, so I don’t have to use the virtio network drivers, which are a mess, especially for multiplayer games. So I have full, basically bare-metal, real-life Ethernet inside the VM. I also pass through my HD audio controller, which passes through the rear I/O audio, like the S/PDIF and optical ports, for my soundbar. Obviously I pass through my 3090, which is the only GPU in the system.

And yet I have every single one of those things available to the host when the VM isn’t running. So again:

“This means, **even when not used in the VM**, a device can no longer be used on the host, when it is an “IOMMU group sibling” of a passed through device.” is not true. It requires nothing but a line in a kvm.conf file, and a line or two in the start.sh and release.sh hook scripts for each IOMMU group device you want to pass through.
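A minimal, hypothetical sketch of the detach/reattach idea described above (not the commenter’s actual kvm.conf or hook scripts): the device list, the PCI addresses and the start/release arguments are placeholders. It relies on “virsh nodedev-detach” and “virsh nodedev-reattach”, which move a PCI device from its host driver to vfio-pci and back. Two caveats: libvirt already does this by itself for <hostdev> entries with managed='yes', and libvirt’s hook documentation warns that a hook script calling back into libvirt (for example via virsh) can deadlock, so treat this as something to run by hand before starting and after stopping the VM.

```shell
#!/bin/sh
# Hypothetical helper: detach passthrough devices from the host before the VM
# starts and hand them back afterwards.
# Usage: ./passthrough-devices.sh start|release   (script name is a placeholder)

# kvm.conf-style device list. These PCI addresses are placeholders; take the
# real ones (domain:bus:slot.function) from your own "lspci -D" output.
PASSTHROUGH_DEVICES="0000:0c:00.3 0000:0a:00.4"

# Turn "0000:0c:00.3" into libvirt's node device name "pci_0000_0c_00_3".
to_nodedev() {
    printf 'pci_%s\n' "$(printf '%s' "$1" | tr ':.' '__')"
}

# start: unbind each device from its host driver and bind it to vfio-pci.
detach_all() {
    for dev in $PASSTHROUGH_DEVICES; do
        virsh nodedev-detach "$(to_nodedev "$dev")"
    done
}

# release: return each device to its normal host driver.
reattach_all() {
    for dev in $PASSTHROUGH_DEVICES; do
        virsh nodedev-reattach "$(to_nodedev "$dev")"
    done
}

case "$1" in
    start) detach_all ;;
    release) reattach_all ;;
esac
```

Between the two calls the devices are usable by the host again, which is the behaviour the commenter describes.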

This isn’t only for single-GPU passthrough either. As I’ve already stated, it’s the best way to use the same keyboard and mouse (and whatever other USB devices) in a VM, better than even a KVM switch. Hell, I even used the VM to reset my aunt’s iPhone (which requires iTunes and all that). And I know a ton of people who do regular dual-GPU passthrough and do the same thing, even with their guest GPU, so they can use it for compute tasks while the VM isn’t running.

montclairtubbsr

Thank you for the post.

I get the following error when I try to start the VM while passing through multiple identical GPUs (same brand, same model):

“Error starting domain: internal error: process exited while connecting to monitor”

The thing is, it worked for almost a week, but now it doesn’t work anymore and I can only pass through one GPU at a time.

The command “sudo lspci -nnv” returns:

01:00.0 VGA compatible controller [0300]: NVIDIA Corporation GA104 [GeForce RTX 3070 Ti] [10de:2482] (rev a1) (prog-if 00 [VGA controller])
	Subsystem: CardExpert Technology GA104 [GeForce RTX 3070 Ti] [10b0:2482]
	….
	Kernel driver in use: vfio-pci

02:00.0 VGA compatible controller [0300]: NVIDIA Corporation GA104 [GeForce RTX 3070 Ti] [10de:2482] (rev a1) (prog-if 00 [VGA controller])
	Subsystem: CardExpert Technology GA104 [GeForce RTX 3070 Ti] [10b0:2482]
	…
	Kernel driver in use: vfio-pci

03:00.0 VGA compatible controller [0300]: NVIDIA Corporation GA104 [GeForce RTX 3070 Ti] [10de:2482] (rev a1) (prog-if 00 [VGA controller])
	Subsystem: CardExpert Technology GA104 [GeForce RTX 3070 Ti] [10b0:2482]
	…
	Kernel driver in use: vfio-pci
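Because every device in an IOMMU group has to be passed through together, it is worth checking which group each of the identical cards ended up in. A minimal sketch of the widely used /sys/kernel/iommu_groups loop; the function name and the optional directory argument are illustrative additions:

```shell
#!/bin/sh
# List every IOMMU group and the PCI devices it contains.
# The optional argument points at an alternative groups directory (handy for
# testing); it defaults to the kernel's sysfs location.
list_iommu_groups() {
    root="${1:-/sys/kernel/iommu_groups}"
    for group in "$root"/*; do
        # Skip anything that is not a group directory (or an unmatched glob).
        [ -d "$group/devices" ] || continue
        echo "IOMMU group ${group##*/}:"
        for dev in "$group"/devices/*; do
            [ -e "$dev" ] || continue
            addr="${dev##*/}"
            desc=""
            # Prefer lspci's human-readable description when it is available.
            if command -v lspci >/dev/null 2>&1; then
                desc="$(lspci -nns "$addr" 2>/dev/null)"
            fi
            printf '\t%s\n' "${desc:-$addr}"
        done
    done
}

list_iommu_groups "$@"
```

Run it without arguments on the host; ideally each card (plus its audio function, if it has one) shows up in a group that contains nothing else.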

hunter

Managed to get this working on Xubuntu 21.10 with twin GTX 1060s, thanks to this guide. I rewrote the script for handling initramfs (I had to do it by bus address rather than by ID, since the cards are identical). Worked like a charm. It seems that huge pages throw a memory allocation error; I haven’t looked into it yet.
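Binding by bus address rather than by vendor:device ID can be done with the kernel’s per-device “driver_override” attribute. The sketch below is a hypothetical initramfs-tools init-top script in the style of commonly shared examples, not hunter’s actual script; the file path and the PCI addresses are placeholders. It marks each listed device as vfio-pci-only and asks the PCI core to probe it again, so the host GPU driver can no longer claim it.

```shell
#!/bin/sh
# Hypothetical initramfs-tools script, e.g. saved (executable) as
# /etc/initramfs-tools/scripts/init-top/vfio-override.sh, followed by
# "sudo update-initramfs -u".
# Binds devices to vfio-pci by PCI bus address, which also works when two
# cards share the same vendor:device ID.

# Standard initramfs-tools prerequisite boilerplate.
PREREQ=""
prereqs() { echo "$PREREQ"; }
case "$1" in
    prereqs) prereqs; exit 0 ;;
esac

# Placeholder addresses: the guest GPU and its audio function.
GUEST_DEVICES="0000:0b:00.0 0000:0b:00.1"

# Sysfs location; overridable only so the function can be tried on a fake tree.
SYSFS_ROOT="${SYSFS_ROOT:-/sys}"

bind_to_vfio() {
    for dev in "$@"; do
        override="$SYSFS_ROOT/bus/pci/devices/$dev/driver_override"
        # Skip addresses that do not exist (or are not writable) on this machine.
        [ -w "$override" ] || continue
        # From now on only vfio-pci may bind this device ...
        echo "vfio-pci" > "$override"
        # ... and the PCI core is asked to probe it again so vfio-pci picks it up.
        echo "$dev" > "$SYSFS_ROOT/bus/pci/drivers_probe"
    done
}

# Word splitting of the space-separated list is intentional here.
bind_to_vfio $GUEST_DEVICES
```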

Henrique

Will this amazing guide be updated to Ubuntu 22.04 LTS?

Frank cap

When you say that “After the following reboot the isolated GPU will be ignored by the host OS. You have to use the other GPU for the host OS NOW!”, do you mean that you HAVE to use the other GPU for the host for the passthrough steps to work, or that you have to use the other GPU for the host only if you want to use a monitor (i.e. you could SSH into the system instead)?

Thank you

David

Thank you so much for this guide!

Roy

Sadly, the 22.04 version of the guide does not load right now. I get a “500 Internal Server Error” when I open the page….

Mathias Hueber

Thank you for the pointer; it should be fixed now.

Update: The problem was WP-spamshield in combination with PHP 8. The current version still uses create_function. This solved it: https://wordpress.org/support/topic/php8-call-to-undefined-function-create_function/

Roy

Ah, there is also a community wiki answer on Ask Ubuntu:

https://askubuntu.com/questions/1406888/ubuntu-22-04-gpu-passthrough-qemu

Christian

Will there be an update to this guide for Ubuntu 23.04? I followed this guide and successfully set things up on 22.04. However, since I updated to 23.04, the nvidia driver always grabs both of my NVIDIA GPUs, and dmesg tells me that “on” isn’t recognized as a valid option for amd_iommu.

Mathias Hueber

Which method are you using for the isolation?

Try the kernelstub method and report back 🙂
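On AMD systems the kernel turns the IOMMU on by default when it is enabled in the firmware, and “on” is not one of the values documented for amd_iommu= (unlike intel_iommu=on on Intel systems), which is consistent with the dmesg message above. A small, hypothetical check of the kernel command line for that and a few related parameters:

```shell
#!/bin/sh
# Hypothetical check: flag IOMMU-related kernel parameters on the command
# line. Reads /proc/cmdline unless a command-line string is passed in.
check_iommu_params() {
    cmdline="${1:-$(cat /proc/cmdline)}"
    for word in $cmdline; do
        case "$word" in
            amd_iommu=on)
                echo "warn: amd_iommu=on is not a recognized value; the AMD IOMMU is enabled by default when enabled in firmware" ;;
            intel_iommu=on|iommu=pt|vfio-pci.ids=*)
                echo "ok: $word" ;;
        esac
    done
}

check_iommu_params "$@"
```

If it prints the warning, dropping amd_iommu=on from the boot configuration should silence the message; on its own that is unlikely to explain the nvidia driver grabbing both GPUs, since the IOMMU stays enabled either way.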

Christian

After trying for several days, I could not get the kernelstub method to work for my hardware setup on Ubuntu 23.04. I was able to get 2 of the 4 PCI Express devices to bind to the vfio driver using initramfs and a modprobe.d config. I have no idea how to make the xhci_pci driver or the snd_hda_intel (HD Audio) driver load after the vfio driver.
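A common way to make a driver load only after vfio-pci is a “softdep” line in a modprobe.d file, used alongside the usual vfio-pci ids option. It only helps when vfio-pci and the competing driver are loadable modules, though; on kernels where vfio-pci is built in (as the guide notes for 5.4 and later), the vfio-pci.ids= kernel parameter is the usual route instead. Whether xhci_pci is a module on a given kernel is an assumption worth checking too. The sketch below, with hypothetical function names, checks the running kernel’s config and, if vfio-pci is a module, prints softdep lines you could put into a file such as /etc/modprobe.d/vfio.conf (followed by “sudo update-initramfs -u”).

```shell
#!/bin/sh
# Hypothetical helper: report whether vfio-pci is built in or a module for a
# given kernel config, and print modprobe.d "softdep" lines for the module case.

# Prints "builtin", "module" or "unknown" for the kernel config file in $1.
vfio_pci_build_type() {
    if grep -q '^CONFIG_VFIO_PCI=y' "$1" 2>/dev/null; then
        echo builtin
    elif grep -q '^CONFIG_VFIO_PCI=m' "$1" 2>/dev/null; then
        echo module
    else
        echo unknown
    fi
}

# Prints one "softdep <driver> pre: vfio-pci" line per driver name given,
# which tells modprobe to load vfio-pci before that driver.
softdep_lines() {
    for drv in "$@"; do
        echo "softdep $drv pre: vfio-pci"
    done
}

config="/boot/config-$(uname -r)"
case "$(vfio_pci_build_type "$config")" in
    module)
        softdep_lines snd_hda_intel xhci_pci ;;
    builtin)
        echo "vfio-pci is built into this kernel: use vfio-pci.ids= on the kernel command line instead" ;;
    *)
        echo "could not read $config" ;;
esac
```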